I am very much on record as being extremely tired of having the same discussion about AI over and over again. Over and over again, colleagues both on my campus and more broadly express deep anxiety, frustration, and anger about the ways that “AI” has been deployed by various tech companies and disappointment in students who have taken to it both innocently and for less appropriate reasons. All of which I share. Indeed, I have noted with increasing dismay how difficult students find reading and understanding relatively short texts, a problem exacerbated by (though almost certainly not simply caused by) the use of AI to summarize for them. However, these conversations almost always remain just gripe sessions, ending without any real solutions or advice about what to do in the classroom. My own policy, thus far, has been to ban its use, clearly explain why I am doing so (in short: the goal of a history class is to learn to think and write on one’s own), and sometimes devote some class time to discussing it. I am 100% sure that such bans have not been entirely effective, though I do think that taking time out of class to talk about it and explain my reasoning has been successful in lessening its more nefarious uses. That said, it is obvious to everyone reading about AI in higher education and to anyone in the classroom that students are using it all the time, and I am somewhat at a loss as to what to do about it.

So I had a bit of a different response than a lot of people to the recent publication of “Guiding Principles for Artificial Intelligence in History Education” from the American Historical Association. Most people on Bluesky dismissed it outright, as accepting what should not be accepted in the first place: that AI is here to stay (I’ll note here that I hate referring to ChatGPT and tools of its kind as “AI,” which it is not, but that seems to be the terminology). On the other hand, I’ve seen a few responses lamenting that so many historians are dismissing AI out of hand, especially its possible uses in research (this I saw on a private forum of AHA members, so no link). I think both miss what the document is trying to address: what should educators, some of whom are going to be entering the classroom in two weeks, actually do about these tools now, as they exist in the world and are being used by our students? What practical advice might be helpful for instructors developing syllabi right now? Taken on those terms, I find its advice somewhat helpful, if occasionally less clear than it might be. It ends with a serious “wtf?” So some thoughts. (I also, as an aside, wish folks commenting on these kinds of documents would remember that they were produced by their colleagues who, I try to believe, deserve grace and the assumption that they are not shills for tech companies.)

First, I read the document as premised on different assumptions than those animating AI-boosters in both tech and higher education. The recent Microsoft-produced list of professions most likely to be replaced by AI laughably included “historian” near the top, which of course simply means that whoever (or whatever) made the list doesn’t know what a historian actually does. As the “Guiding Principles” explains, “Generative AI tools risk promoting an illusion that the past is fully knowable.” The Microsoft list speaks to a more broadly shared misunderstanding about what historians actually do. Historians seek out new knowledge, interpretations, sources, and ideas; they do not simply recreate (as generative AI does) what is already there. The past does not exist independently of our interpretation of it, ready for us to simply discover. My department, prior to the advent of generative AI, redesigned our introductory history course to focus on precisely this point: teaching students that history is an interpretative discipline. Doing so, one might hope, will show the deep limitations of AI in doing the work of history.

Second, when addressing some of these limitations, I wish that the “Guiding Principles” had been more forceful. Having read (and taught) a recent article describing AI “hallucinations” as “bullshit,” I think it is worth asking whether AI is worth using at all when it has a significant chance of bullshitting, rather than “work[ing] to counter these hallucinations when they appear.” It seems to me that AI tools might be best suited to use cases with a clear, user-defined dataset and/or for purposes of refinement and formatting rather than search and/or text generation. In my own life, I admit that I have found generative AI useful in making a schedule of habits and tasks that I had some trouble getting my head around and in planning a road trip, both of which involved me feeding it the data and it then working through a problem that would have taken me a great deal of time. Any tool that bullshits its results does not seem suited for the kinds of tasks we set ourselves or our students in our professional lives.

Third, I am sympathetic to why the “Guiding Principles” declare that “Banning generative AI is not a long-term solution” even as it has been my own solution thus far. On Bluesky, I’ve seen a number of comments arguing that the AHA has betrayed historians, with people saying that they’d blackball any researcher who submitted anything written with the help of AI, and that we should hold the line. A lot of this, I think, comes from a place of true distress at how tech companies have, without our consent, fundamentally changed our relationship to the internet, to research, to writing, and, most importantly, to our students. But I do not think, based on what I have read and seen, it is realistic to hope that this is simply going to go away or that the AHA is in a position to stop its spread. I agree that we should have clear standards about the use of AI in research (and I agree that no one should be using it to write their articles), but that was not the purpose of this document. The tools are out there and basically every single one of our students is already using them.

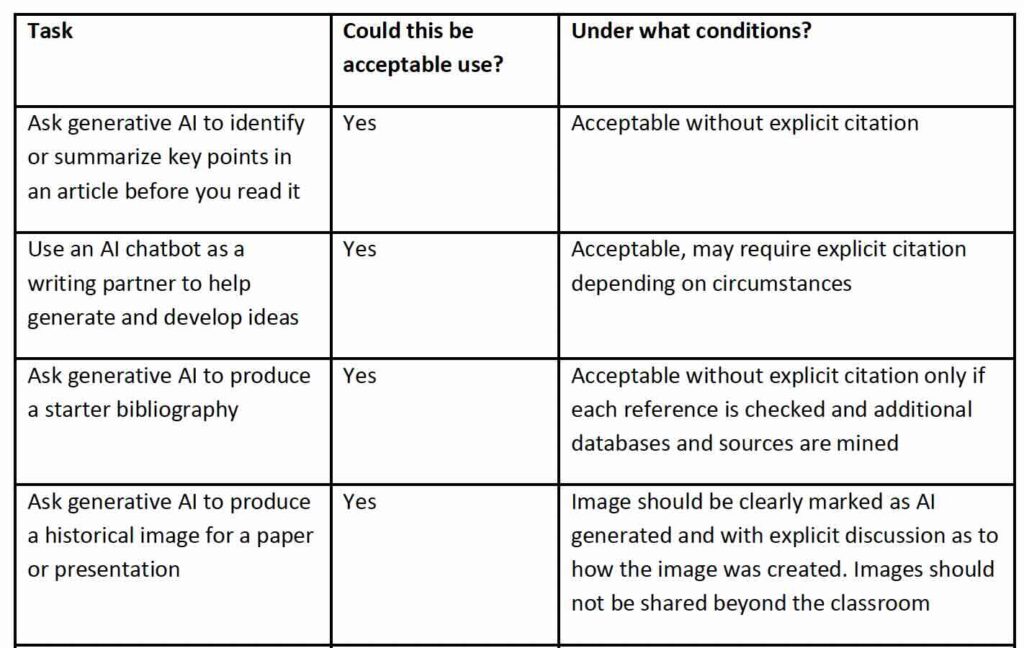

In that sense, finally, I am both annoyed and gratified by the practical advice the “Guiding Principles” provides regarding the need for “concrete and transparent policies.” Breaking down the various ways that students are using these tools in the Appendix helped me better understand some of the questions I need to ask about my assignments and how I will approach AI conversations in the classroom. Indeed, while I do not think my policies will be changing after having read the document (I still find the use of generative AI to be counter to the goals of my courses), I do think I can better answer students when they inevitably ask about specific use cases. For instance, I actually do not mind if a student uses AI to help them format a footnote. I already allow them to use (and use myself) citation managers and I see little difference in transferring that work (especially for a short paper) to a different tool. I appreciate being prodded to think clearly about the various ways that students are going to use these tools so that I can formulate a response and a policy in advance.

What concerns me, and I think this is where the Committee needed to really rethink their approach (my “wtf?” moment), is that the document does not just provide a sample template for an AI policy, but also provides one that is already filled out. I do not think this was the intent of the Committee, but it reads as recommendations for an AI policy, rather than an example of a completed syllabus policy. And so, when readers come across an AHA-branded document that claims that it is acceptable to “ask generative AI to identify or summarize key points in an article before you read it,” people are rightfully alarmed. One of the points of a history class is to read the article. Even worse is suggesting that it is ok for students to generate a historical image, which seems wildly inappropriate even if the student cites such use. Such language has been circulating on social media and is shaping how people are responding to the document as a whole.

I, by and large, hate these tools. I hate how Google is basically unusable now. I hate how tech bros think they know better than those with expertise. I hate how these companies have reshaped our world without our consent. I hate how the actual use cases for these tools seem much more narrow than people think. I hate how the widespread use of these tools is going to lead to a much dumber world, where new ideas have much more difficulty getting out there. But I also don’t think I’m in a position to stop it. Instead, we need new strategies to get students (to say nothing of the broader public) to value the purpose of learning itself, to get excited about the process, and to recognize the importance of the skills that AI-boosters claim (read: lie about) will be replaced. I am doubtful that the AI bubble is going to just burst, as I see some people claim on social media. I hope I am wrong, but am planning for being right.